Black Box Models

A black box model is one that can be viewed in terms of its "inputs and outputs, without any knowledge of its internal workings." Such models have grown in popularity. Initially, black box models proved effective, since machine learning was historically applied to problems that did not deeply affect human lives. However, as artificial intelligence and machine learning become more powerful, models must maintain a high level of performance while enabling human users to "understand, appropriately trust, and efficiently manage the emerging generation of artificially intelligent partners." In high-stakes situations, where trust and transparency are necessary, explainable artificial intelligence is essential. We aim to provide this transparency and to move away from black box models.

"The belief that accuracy must be sacrificed for interpretability is inaccurate."

Rudin & Radin

Coronavirus

As of early February, the coronavirus disease known as COVID-19 has infected over 100 million individuals worldwide and has killed over 2.5 million, nearly 500,000 of them Americans. According to the CDC, COVID-19 spreads most commonly through close contact, primarily when individuals with COVID-19 "cough, sneeze, sing, talk, or breathe," as the virus is transmitted through respiratory droplets. Moreover, the virus may infect people who are not in close contact through airborne transmission. According to John Brooks, a medical epidemiologist at the CDC in Atlanta, masks bring down the community viral load and are used to protect others rather than oneself. Masks are imperative in helping to prevent the spread of the coronavirus; however, they must also be worn properly.

Our Approach

The Dataset

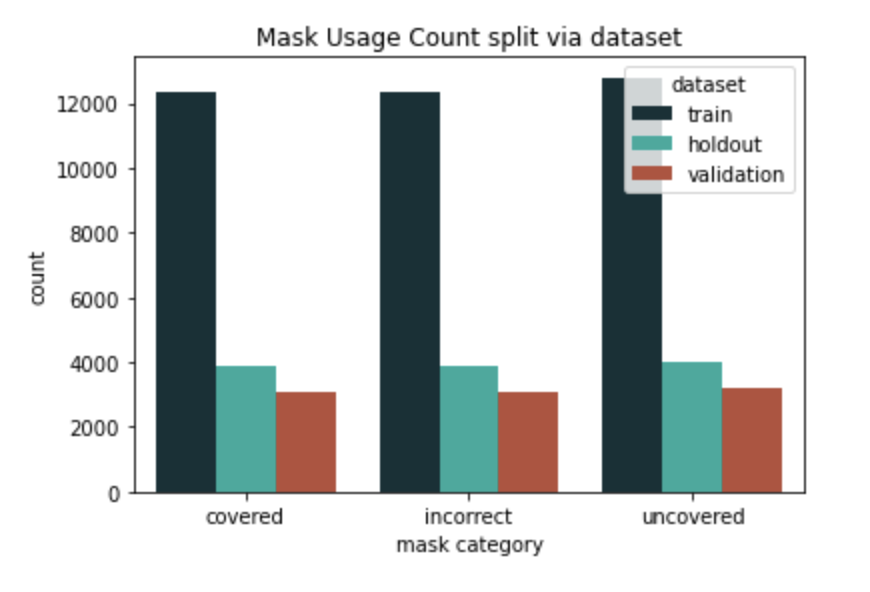

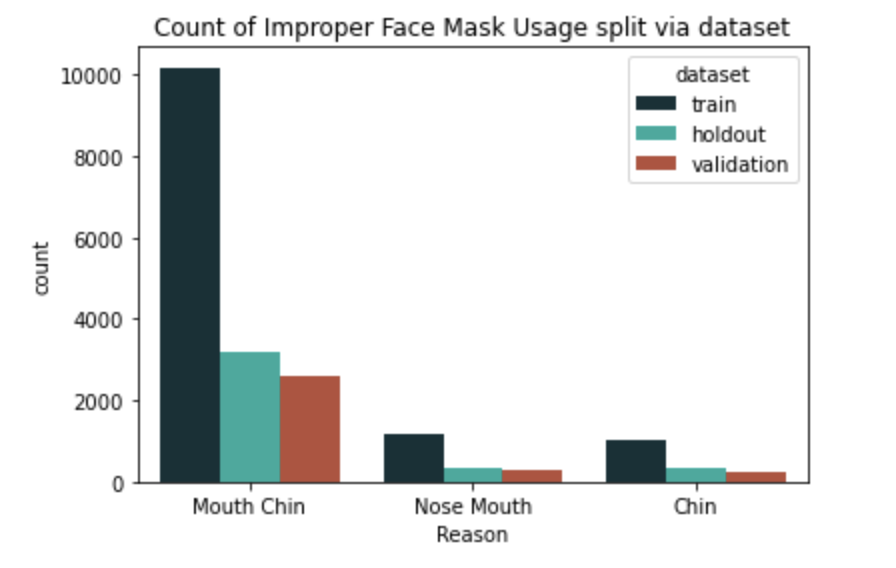

A subset of the MaskedFace-Net dataset was used to train our model. The dataset was created from the Flickr-Faces-HQ dataset by photoshopping a blue medical face mask onto each individual's face, which makes it an artificially created dataset. The dataset itself is a collection of 58,582 images of properly masked faces, improperly masked faces, and unmasked faces. The improperly masked faces are further split into three categories: masks covering only the mouth and chin, masks covering only the nose and mouth, and masks covering only the chin. For a face mask to be considered properly worn, it must cover the nose, mouth, and chin.

A histogram of the counts of correctly worn masks, incorrectly worn masks, and no masks, split by training, validation, and test set.

A histogram of the counts of incorrectly worn masks by reason. Mouth/Chin means that the mouth and chin were covered but the nose was not; the remaining categories are named likewise.
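The labeling rule described in this section (a mask counts as properly worn only when it covers the nose, mouth, and chin) can be sketched in a few lines of Python. This is only an illustration: the function names, the label strings, and the idea of representing each image as three coverage flags are our own assumptions, and they are not taken from the MaskedFace-Net tooling.

```python
from typing import Optional

# Hypothetical names for the three improper-wear categories,
# keyed by (nose_covered, mouth_covered, chin_covered).
IMPROPER_SUBTYPES = {
    (False, True, True): "mouth_chin",   # mouth and chin covered, nose exposed
    (True, True, False): "nose_mouth",   # nose and mouth covered, chin exposed
    (False, False, True): "chin_only",   # only the chin covered
}


def mask_class(nose: bool, mouth: bool, chin: bool) -> str:
    """Map coverage flags to one of the three classes used for training."""
    covered = (nose, mouth, chin)
    if all(covered):
        return "proper"
    if not any(covered):
        # Assumption: nothing covered is treated as "no mask at all".
        return "no_mask"
    return "improper"


def improper_subtype(nose: bool, mouth: bool, chin: bool) -> Optional[str]:
    """Return the improper-wear category, or None if it is not one of the three."""
    return IMPROPER_SUBTYPES.get((nose, mouth, chin))
```

For example, `mask_class(False, True, True)` returns `"improper"` and `improper_subtype(False, True, True)` returns `"mouth_chin"`.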

The Model

The model architecture used was Inception ResNet V1, which is a variant of the original FaceNet model. We trained a model without pretrained weights on three classes: improperly worn, properly worn, and no mask at all. In terms of image processing, the images were resized to 256 x 256 and transformed into tensors. Inception ResNet V1 works best with images that are cropped to the face; since the images in our dataset were already cropped to the face, this did not prove to be an issue. However, model performance may change on images that are not cropped in this way. More information on this can be found in our discussion section below. Ultimately, our model achieved an accuracy of 0.96.

GradCAM

GradCAM, or Gradient-weighted Class Activation Mapping, was used to provide transparency. GradCAM uses gradient information from the last convolutional layer of our CNN to produce a coarse heat map. Essentially, GradCAM provides insight into where our model is looking when it makes its decisions. Sample images of GradCAM outputs on several of the dataset images are shown below.
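To make the heat map computation concrete, here is a minimal, dependency-free sketch of the core GradCAM arithmetic. It is not our training code. It assumes that the feature maps of the last convolutional layer and the gradients of the chosen class score with respect to them have already been extracted (in practice, from the deep learning framework), and the function name `grad_cam` is hypothetical. Plain nested lists are used so that each step stays visible.

```python
from typing import List

Map2D = List[List[float]]


def grad_cam(activations: List[Map2D], gradients: List[Map2D]) -> Map2D:
    """Combine K feature maps into one coarse heat map.

    activations[k][i][j]: output of channel k of the last convolutional layer.
    gradients[k][i][j]:   gradient of the class score w.r.t. that activation.
    """
    height = len(activations[0])
    width = len(activations[0][0])

    # 1. Channel weight = global average of that channel's gradients.
    weights = [sum(map(sum, g)) / (height * width) for g in gradients]

    # 2. Weighted sum of the feature maps, followed by ReLU so that only
    #    regions with a positive influence on the class remain.
    cam = [
        [
            max(0.0, sum(w * a[i][j] for w, a in zip(weights, activations)))
            for j in range(width)
        ]
        for i in range(height)
    ]

    # 3. Scale to [0, 1] for display (skipped if the map is all zeros).
    peak = max(map(max, cam))
    if peak > 0:
        cam = [[value / peak for value in row] for row in cam]
    return cam
```

The resulting map has the spatial size of the last convolutional layer, which is why the heat map is coarse; it is typically upsampled to the input resolution before being overlaid on the image.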

Our Results and Discussion

Overall, our model was fairly successful, reaching 96% accuracy. However, it has several weaknesses.

First, the dataset contains only blue medical face masks. It does not contain cloth, KN95, or N95 masks, which are also commonly used during the COVID-19 pandemic, so we are not sure how our model would perform on other types of face mask. This weakness can be attributed to the lack of suitable face mask datasets: although several datasets include a variety of face masks in various settings, many do not include improper mask usage and have only two categories, mask and no mask.

Second, the dataset consists of face masks photoshopped onto cropped faces, so it is ultimately artificial. People who wear masks most likely do not wear them as neatly as a mask photoshopped onto a face, nor will real images be cropped to the face as perfectly as those in this dataset.

Third, although an accuracy of 96% is fairly high, in a high-stakes setting a 4% misclassification rate could have detrimental effects (for example, if someone who was COVID-19 positive wore a mask improperly and our model deemed it proper usage).

Finally, due to computational and time constraints, we were not able to run our model in real time, which would be necessary to deploy it at scale in a business or school. Regardless of these weaknesses, we achieved our goal of building a model that users can trust, especially after a successful GradCAM implementation.
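As a rough illustration of what the 4% error rate discussed above means in practice, the sketch below converts an accuracy into an expected number of misclassified images. The function name and the figure of 1,000 images are hypothetical, and the calculation assumes that the test-set accuracy carries over unchanged to new images, which may not hold outside this artificial dataset.

```python
def expected_misclassifications(num_images: int, accuracy: float) -> int:
    """Expected number of wrong predictions at a given accuracy."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return round(num_images * (1.0 - accuracy))


# Hypothetical volume: 1,000 images checked at the reported accuracy of 0.96.
print(expected_misclassifications(1000, 0.96))  # prints 40
```

Accuracy alone does not say which kind of error occurs; the costly case described above (improper usage classified as proper) is only one part of those misclassifications.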